A technical paper titled “WWW: What, When, Where to Compute-in-Memory” was published by researchers at Purdue University.

Abstract:

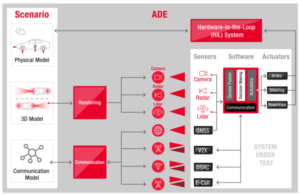

“Compute-in-memory (CiM) has emerged as a compelling solution to alleviate high data movement costs in von Neumann machines. CiM can perform massively parallel general matrix multiplication (GEMM) operations in memory, the dominant computation in Machine Learning (ML) inference. However, re-purposing memory for compute poses key questions on 1) What type of CiM to use: Given a multitude of analog and digital CiMs, determining their suitability from systems perspective is needed. 2) When to use CiM: ML inference includes workloads with a variety of memory and compute requirements, making it difficult to identify when CiM is more beneficial than standard processing cores. 3) Where to integrate CiM: Each memory level has different bandwidth and capacity, that affects the data movement and locality benefits of CiM integration.

In this paper, we explore answers to these questions regarding CiM integration for ML inference acceleration. We use Timeloop-Accelergy for early system-level evaluation of CiM prototypes, including both analog and digital primitives. We integrate CiM into different cache memory levels in an Nvidia A100-like baseline architecture and tailor the dataflow for various ML workloads. Our experiments show CiM architectures improve energy efficiency, achieving up to 0.12x lower energy than the established baseline with INT-8 precision, and upto 4x performance gains with weight interleaving and duplication. The proposed work provides insights into what type of CiM to use, and when and where to optimally integrate it in the cache hierarchy for GEMM acceleration.”

Find the technical paper here. Published December 2023 (preprint).

Sharma, Tanvi, Mustafa Ali, Indranil Chakraborty, and Kaushik Roy. “WWW: What, When, Where to Compute-in-Memory.” arXiv preprint arXiv:2312.15896 (2023).

Related Reading

Increasing AI Energy Efficiency With Compute In Memory

How to process zettascale workloads and stay within a fixed power budget.

Modeling Compute In Memory With Biological Efficiency

Generative AI forces chip makers to use compute resources more intelligently.

SRAM In AI: The Future Of Memory

Why SRAM is viewed as a critical element in new and traditional compute architectures.


Source: https://semiengineering.com/cim-integration-for-ml-inference-acceleration/
