This is a guest blog post co-written with Patrick Oberherr from Contentful and Johannes Günther from Netlight Consulting.

This blog post shows how to improve security in a data pipeline architecture based on Amazon Managed Workflows for Apache Airflow (Amazon MWAA) and Amazon Elastic Kubernetes Service (Amazon EKS) by setting up fine-grained permissions, using HashiCorp Terraform for infrastructure as code.

Many AWS customers use Amazon EKS to execute their data workloads. The advantages of Amazon EKS include different compute and storage options depending on workload needs, higher resource utilization by sharing underlying infrastructure, and a vibrant open-source community that provides purpose-built extensions. The Data on EKS project provides a series of templates and other resources to help customers get started on this journey. It includes a description of using Amazon MWAA as a job scheduler.

Contentful is an AWS customer and AWS Partner Network (APN) partner. Behind the scenes of their Software-as-a-Service (SaaS) product, the Contentful Composable Content Platform, Contentful uses insights from data to improve business decision-making and customer experience. Contentful engaged Netlight, an APN consulting partner, to help set up a data platform to gather these insights.

Most of Contentful’s application workloads run on Amazon EKS, and knowledge of this service and Kubernetes is widespread in the organization. That’s why Contentful’s data engineering team decided to run data pipelines on Amazon EKS as well. For job scheduling, they started with a self-operated Apache Airflow on an Amazon EKS cluster and later switched to Amazon MWAA to reduce engineering and operations overhead. The job execution remained on Amazon EKS.

Contentful runs a complex data pipeline using this infrastructure, including ingestion from multiple data sources and different transformation jobs, for example using dbt. The whole pipeline shares a single Amazon MWAA environment and a single Amazon EKS cluster. With a diverse set of workloads in a single environment, it is necessary to apply the principle of least privilege, ensuring that individual tasks or components have only the specific permissions they need to function.

By segmenting permissions according to roles and responsibilities, Contentful’s data engineering team was able to create a more robust and secure data processing environment, which is essential for maintaining the integrity and confidentiality of the data being handled.

In this blog post, we walk through setting up the infrastructure from scratch and deploying a sample application using Terraform, Contentful’s tool of choice for infrastructure as code.

Prerequisites

To follow along with this blog post, you need the latest version of the following tools installed:

Overview

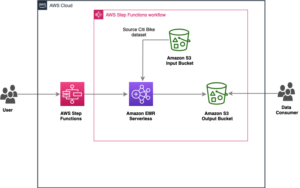

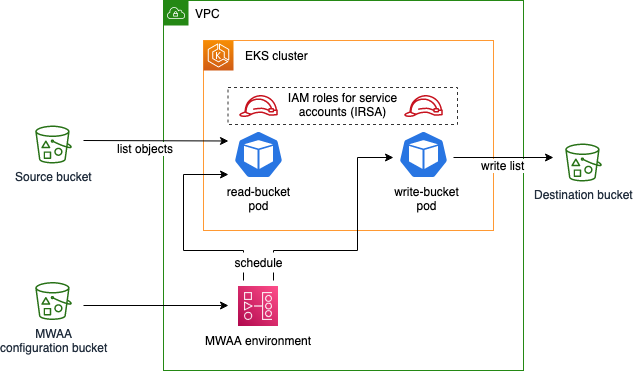

In this blog post, you will create a sample application with the following infrastructure:

The sample Airflow workflow lists objects in the source bucket, temporarily stores this list using Airflow XComs, and writes the list as a file to the destination bucket. This application is executed using Amazon EKS pods, scheduled by an Amazon MWAA environment. You deploy the EKS cluster and the MWAA environment into a virtual private cloud (VPC) and apply least-privilege permissions to the EKS pods using IAM roles for service accounts. The configuration bucket for Amazon MWAA contains runtime requirements, as well as the application code specifying an Airflow Directed Acyclic Graph (DAG).
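To make the data flow concrete, here is a minimal Python sketch that mimics the workflow locally. It is only an illustration: plain dictionaries stand in for the S3 buckets and for Airflow XCom, and the function names, bucket names, and `xcom` store are hypothetical. Nothing here calls AWS or Airflow.

```python
import json


def read_bucket(buckets, source, xcom):
    """Stand-in for the first task: list object keys and pass them on via 'XCom'."""
    keys = sorted(buckets[source])
    xcom["read_bucket"] = keys
    return keys


def write_bucket(buckets, destination, xcom):
    """Stand-in for the second task: store the list from 'XCom' as list.json."""
    keys = xcom["read_bucket"]
    buckets[destination]["list.json"] = json.dumps(keys)


# Hypothetical in-memory "buckets"; the source holds the empty dummy.txt object.
buckets = {"source-bucket": {"dummy.txt": ""}, "destination-bucket": {}}
xcom = {}

read_bucket(buckets, "source-bucket", xcom)
write_bucket(buckets, "destination-bucket", xcom)
print(buckets["destination-bucket"]["list.json"])  # prints ["dummy.txt"]
```

In the real setup, each of the two steps runs in its own pod with its own service account, so the reading step cannot write and the writing step cannot read.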

Initialize the project and create buckets

Create a file main.tf with the following content in an empty directory:

This file defines the Terraform AWS provider as well as the source and destination bucket, whose names are exported as AWS Systems Manager parameters. It also tells Terraform to upload an empty object named dummy.txt into the source bucket, which enables the Airflow sample application we will create later to receive a result when listing bucket content.

Initialize the Terraform project and download the module dependencies by running terraform init.

Create the infrastructure by running terraform apply.

Terraform asks you to acknowledge changes to the environment and then starts deploying resources in AWS. Upon successful deployment, you should see the following success message:

Create the VPC

Create a new file vpc.tf in the same directory as main.tf and insert the following:

This file defines the VPC, a virtual network, that will later host the Amazon EKS cluster and the Amazon MWAA environment. Note that we use an existing Terraform module for this, which wraps configuration of underlying network resources like subnets, route tables, and NAT gateways.

Download the VPC module:

Deploy the new resources:

Note which resources are being created. By using the VPC module in our Terraform file, much of the underlying complexity is taken away when defining our infrastructure, but it’s still useful to know what exactly is being deployed.

Note that Terraform now handles resources we defined in both files, main.tf and vpc.tf, because Terraform includes all .tf files in the current working directory.

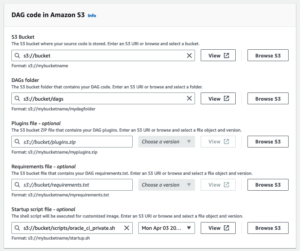

Create the Amazon MWAA environment

Create a new file mwaa.tf and insert the following content:

Like before, we use an existing module to save configuration effort for the Amazon MWAA environment. The module also creates the configuration bucket, which we use to specify the runtime dependency of the application (apache-airflow-cncf-kubernetes) in the requirements.txt file. This package, in combination with the preinstalled package apache-airflow-amazon, enables interaction with Amazon EKS.

Download the MWAA module:

Deploy the new resources:

This operation takes 20–30 minutes to complete.

Create the Amazon EKS cluster

Create a file eks.tf with the following content:

To create the cluster itself, we take advantage of the Amazon EKS Blueprints for Terraform project. We also define a managed node group with one node as the target size. Note that with fluctuating load, scaling your cluster with Karpenter instead of managed node groups lets the cluster scale more flexibly. We used managed node groups primarily because of the ease of configuration.

We define the identity that the Amazon MWAA execution role assumes in Kubernetes using the map_roles variable. After configuring the Terraform Kubernetes provider, we give the Amazon MWAA execution role permissions to manage pods in the cluster.
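The shape of this mapping can be sketched in Python as plain data structures. This is a hedged illustration, not the content of eks.tf: the keys `rolearn`, `username`, and `groups` follow the aws-auth role mapping format, while the role ARN, user name, namespace, resource names, and the exact list of verbs are assumptions chosen for the example.

```python
RBAC_API_GROUP = "rbac.authorization.k8s.io"


def map_role_entry(role_arn, username, groups=()):
    """One role mapping: an IAM role becomes a Kubernetes user (values are hypothetical)."""
    return {"rolearn": role_arn, "username": username, "groups": list(groups)}


def pod_manager_role(name, namespace):
    """A namespaced Role that allows managing pods; the verb list is an assumption."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "Role",
        "metadata": {"name": name, "namespace": namespace},
        "rules": [
            {
                "apiGroups": [""],
                "resources": ["pods", "pods/log", "pods/exec"],
                "verbs": ["create", "get", "list", "watch", "delete", "patch"],
            }
        ],
    }


def role_binding(name, namespace, role_name, username):
    """Bind the Role to the Kubernetes user that the IAM role is mapped to."""
    return {
        "apiVersion": f"{RBAC_API_GROUP}/v1",
        "kind": "RoleBinding",
        "metadata": {"name": name, "namespace": namespace},
        "subjects": [{"kind": "User", "name": username, "apiGroup": RBAC_API_GROUP}],
        "roleRef": {"kind": "Role", "name": role_name, "apiGroup": RBAC_API_GROUP},
    }


# Hypothetical identifiers, for illustration only.
entry = map_role_entry("arn:aws:iam::111122223333:role/example-mwaa-execution-role", "mwaa-service")
role = pod_manager_role("mwaa-pod-manager", "default")
binding = role_binding("mwaa-pod-manager-binding", "default", role["metadata"]["name"], entry["username"])
```

The point of the indirection is that the MWAA execution role never receives cluster-wide rights: it is mapped to one Kubernetes user, and that user is bound to a role limited to pods in one namespace.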

Download the EKS Blueprints for Terraform module:

Deploy the new resources:

This operation takes about 12 minutes to complete.

Create IAM roles for service accounts

Create a file roles.tf with the following content:

This file defines two Kubernetes service accounts, source-bucket-reader-sa and destination-bucket-writer-sa, and their permissions against the AWS API, using IAM roles for service accounts (IRSA). Again, we use a module from the Amazon EKS Blueprints for Terraform project to simplify IRSA configuration. Note that both roles only get the minimum permissions that they need, defined using AWS IAM policies.
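As a rough illustration of what "minimum permissions" can look like, the following Python sketch builds IAM policy documents of the kind such roles might use. The choice of `s3:ListBucket` for the reader and `s3:PutObject` for the writer is an assumption derived from what the two tasks do, and the bucket names, account ID, and OIDC provider string are placeholders. The trust policy shows the usual IRSA pattern of restricting `sts:AssumeRoleWithWebIdentity` to a single service account.

```python
import json


def bucket_reader_policy(bucket):
    """Allow listing one bucket and nothing else."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": ["s3:ListBucket"], "Resource": [f"arn:aws:s3:::{bucket}"]}
        ],
    }


def bucket_writer_policy(bucket):
    """Allow writing objects into one bucket and nothing else."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {"Effect": "Allow", "Action": ["s3:PutObject"], "Resource": [f"arn:aws:s3:::{bucket}/*"]}
        ],
    }


def irsa_trust_policy(account_id, oidc_provider, namespace, service_account):
    """Let exactly one Kubernetes service account assume the role via the cluster's OIDC provider."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                "Principal": {"Federated": f"arn:aws:iam::{account_id}:oidc-provider/{oidc_provider}"},
                "Action": "sts:AssumeRoleWithWebIdentity",
                "Condition": {
                    "StringEquals": {
                        f"{oidc_provider}:sub": f"system:serviceaccount:{namespace}:{service_account}",
                        f"{oidc_provider}:aud": "sts.amazonaws.com",
                    }
                },
            }
        ],
    }


# Placeholder values, for illustration only.
provider = "oidc.eks.eu-west-1.amazonaws.com/id/EXAMPLE"
trust = irsa_trust_policy("111122223333", provider, "default", "source-bucket-reader-sa")
print(json.dumps(bucket_reader_policy("example-source-bucket"), indent=2))
```

Note the different resource ARNs: listing is a bucket-level action, while writing targets objects inside the bucket, hence the `/*` suffix.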

Download the new module:

Deploy the new resources:

Create the DAG

Create a file dag.py defining the Airflow DAG:

The DAG is defined to run on an hourly schedule, with two tasks running one after the other: read_bucket with service account source-bucket-reader-sa, and write_bucket with service account destination-bucket-writer-sa. Both are run using the EksPodOperator, which is responsible for scheduling the tasks on Amazon EKS, using the AWS CLI Docker image to run commands. The first task lists files in the source bucket and writes the list to Airflow XCom. The second task reads the list from XCom and stores it in the destination bucket. Note that the service_account_name parameter differentiates what each task is permitted to do.
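The following sketch shows how the two task definitions might differ. It deliberately does not import Airflow, so it runs anywhere; it only builds the keyword arguments one could pass to the operator. The cluster name, namespace, bucket names, image tag, and shell commands are assumptions for illustration, and the parameter names reflect our understanding of the EksPodOperator and the underlying KubernetesPodOperator, so check them against your provider version.

```python
# Path from which the pod operator collects the XCom value when do_xcom_push is enabled.
XCOM_FILE = "/airflow/xcom/return.json"


def pod_task_kwargs(task_id, service_account_name, shell_command, push_xcom=False):
    """Build keyword arguments for one pod-based task (all values are illustrative)."""
    return {
        "task_id": task_id,
        "cluster_name": "example-cluster",
        "namespace": "default",
        "service_account_name": service_account_name,  # decides which IAM role the pod gets
        "image": "amazon/aws-cli:latest",
        "cmds": ["sh", "-c", shell_command],
        "do_xcom_push": push_xcom,
    }


# First task: list the keys in the source bucket and hand them over as XCom.
read_kwargs = pod_task_kwargs(
    "read_bucket",
    "source-bucket-reader-sa",
    "aws s3api list-objects-v2 --bucket example-source-bucket "
    "--query 'Contents[].Key' --output json > " + XCOM_FILE,
    push_xcom=True,
)

# Second task: pull the list from XCom via a Jinja template and upload it as list.json.
write_kwargs = pod_task_kwargs(
    "write_bucket",
    "destination-bucket-writer-sa",
    "echo '{{ ti.xcom_pull(task_ids=\"read_bucket\") | tojson }}' "
    "| aws s3 cp - s3://example-destination-bucket/list.json",
)
```

Inside a DAG file, these dictionaries could be unpacked into the operator, for example `EksPodOperator(**read_kwargs)`, together with whatever connection settings your environment needs. Apart from the command, the only meaningful difference between the two tasks is the service account name.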

Create a file dag.tf to upload the DAG code to the Amazon MWAA configuration bucket:

Deploy the changes:

The Amazon MWAA environment automatically imports the file from the S3 bucket.

Run the DAG

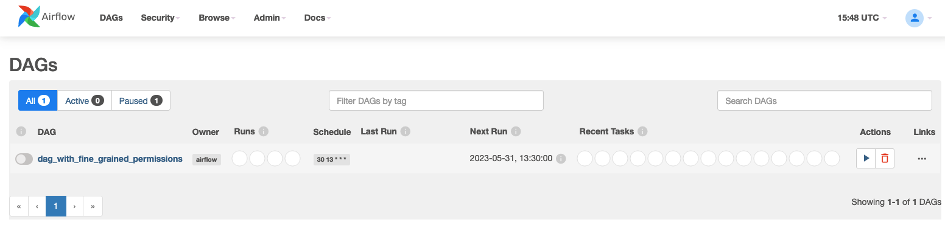

In your browser, navigate to the Amazon MWAA console and select your environment. In the top right-hand corner, select Open Airflow UI. You should see the following:

To trigger the DAG, in the Actions column, select the play symbol and then select Trigger DAG. Click on the DAG name to explore the DAG run and its results.

Navigate to the Amazon S3 console and choose the bucket starting with “destination”. It should contain a file list.json recently created by the write_bucket task. Download the file to explore its content, a JSON list with a single entry.

Clean up

The resources you created in this walkthrough incur AWS costs. To delete the created resources, run terraform destroy and approve the changes in the Terraform CLI dialog.

Conclusion

In this blog post, you learned how to improve the security of your data pipeline running on Amazon MWAA and Amazon EKS by narrowing the permissions of each individual task.

To dive deeper, use the working example created in this walkthrough to explore the topic further: What happens if you remove the service_account_name parameter from an Airflow task? What happens if you exchange the service account names in the two tasks?
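Before trying these experiments on the cluster, it can help to reason about them with a toy model. The sketch below is a deliberately simplified stand-in for IAM evaluation: each service account maps to the set of (action, bucket) pairs its role allows, and a task without a dedicated service account is modeled as having no S3 permissions at all, which is an assumption about the cluster setup. The names `GRANTS`, `is_allowed`, and `run_pipeline` are hypothetical.

```python
# Simplified model: service account -> set of (action, bucket) pairs its IAM role allows.
GRANTS = {
    "source-bucket-reader-sa": {("s3:ListBucket", "source")},
    "destination-bucket-writer-sa": {("s3:PutObject", "destination")},
}


def is_allowed(service_account, action, bucket):
    """Return True if the role behind the service account allows the call in this model."""
    return (action, bucket) in GRANTS.get(service_account, set())


def run_pipeline(reader_sa, writer_sa):
    """Report which of the two tasks would pass the permission check."""
    return {
        "read_bucket": is_allowed(reader_sa, "s3:ListBucket", "source"),
        "write_bucket": is_allowed(writer_sa, "s3:PutObject", "destination"),
    }


print(run_pipeline("source-bucket-reader-sa", "destination-bucket-writer-sa"))
print(run_pipeline("destination-bucket-writer-sa", "source-bucket-reader-sa"))
print(run_pipeline(None, None))
```

In this model only the original assignment lets both tasks pass; with swapped or missing service accounts both checks fail. Comparing that prediction with the actual task logs in the Airflow UI is a good way to confirm that the permissions are scoped as intended.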

For simplicity, in this walkthrough we used a flat file structure with Terraform and Python files inside a single directory. We did not adhere to the standard module structure proposed by Terraform, which is generally recommended. In a real-life project, splitting up the project into multiple Terraform projects or modules may also increase flexibility, speed, and independence between teams owning different parts of the infrastructure.

Lastly, make sure to study the Data on EKS documentation, which provides other valuable resources for running your data pipeline on Amazon EKS, as well as the Amazon MWAA and Apache Airflow documentation for implementing your own use cases. Specifically, have a look at this sample implementation of a Terraform module for Amazon MWAA and Amazon EKS, which contains a more mature approach to Amazon EKS configuration and node automatic scaling, as well as networking.

If you have any questions, you can start a new thread on AWS re:Post or reach out to AWS Support.

About the Authors

Ulrich Hinze is a Solutions Architect at AWS. He partners with software companies to architect and implement cloud-based solutions on AWS. Before joining AWS, he worked for AWS customers and partners in software engineering, consulting, and architecture roles for 8+ years.

Patrick Oberherr is a Staff Data Engineer at Contentful with 4+ years of working with AWS and 10+ years in the Data field. At Contentful he is responsible for infrastructure and operations of the data stack which is hosted on AWS.

Johannes Günther is a cloud & data consultant at Netlight with 5+ years of working with AWS. He has helped clients across various industries designing sustainable cloud platforms and is AWS certified.

Source: https://aws.amazon.com/blogs/big-data/set-up-fine-grained-permissions-for-your-data-pipeline-using-mwaa-and-eks/
