A technical paper titled “WWW: What, When, Where to Compute-in-Memory” was published by researchers at Purdue University.

Abstract:

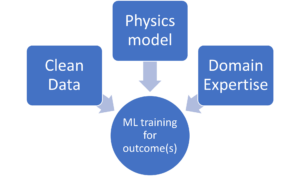

“Compute-in-memory (CiM) has emerged as a compelling solution to alleviate high data movement costs in von Neumann machines. CiM can perform massively parallel general matrix multiplication (GEMM) operations in memory, the dominant computation in Machine Learning (ML) inference. However, re-purposing memory for compute poses key questions on 1) What type of CiM to use: Given a multitude of analog and digital CiMs, determining their suitability from systems perspective is needed. 2) When to use CiM: ML inference includes workloads with a variety of memory and compute requirements, making it difficult to identify when CiM is more beneficial than standard processing cores. 3) Where to integrate CiM: Each memory level has different bandwidth and capacity, that affects the data movement and locality benefits of CiM integration.

In this paper, we explore answers to these questions regarding CiM integration for ML inference acceleration. We use Timeloop-Accelergy for early system-level evaluation of CiM prototypes, including both analog and digital primitives. We integrate CiM into different cache memory levels in an Nvidia A100-like baseline architecture and tailor the dataflow for various ML workloads. Our experiments show CiM architectures improve energy efficiency, achieving up to 0.12x lower energy than the established baseline with INT-8 precision, and up to 4x performance gains with weight interleaving and duplication. The proposed work provides insights into what type of CiM to use, and when and where to optimally integrate it in the cache hierarchy for GEMM acceleration.”

Find the technical paper here. Published December 2023 (preprint).

Sharma, Tanvi, Mustafa Ali, Indranil Chakraborty, and Kaushik Roy. “WWW: What, When, Where to Compute-in-Memory.” arXiv preprint arXiv:2312.15896 (2023).

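Related Reading

Increasing AI Energy Efficiency With Compute In Memory

How to process zettascale workloads and stay within a fixed power budget.

Modeling Compute In Memory With Biological Efficiency

Generative AI forces chipmakers to use compute resources more intelligently.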

SRAM In AI: The Future Of Memory

Why SRAM is viewed as a critical element in new and traditional compute architectures.

Source: https://semiengineering.com/cim-integration-for-ml-inference-acceleration/
