
For parallel processing, we divide our task into sub-units. It increases the number of jobs processed by the program and reduces overall processing time.

For example, suppose you are working with a large CSV file and want to modify a single column. We feed the data to the function as an array, and it processes multiple values at once in parallel, based on the number of available workers. The number of workers is based on the number of cores in your processor.

Note: using parallel processing on a smaller dataset will not improve processing time.

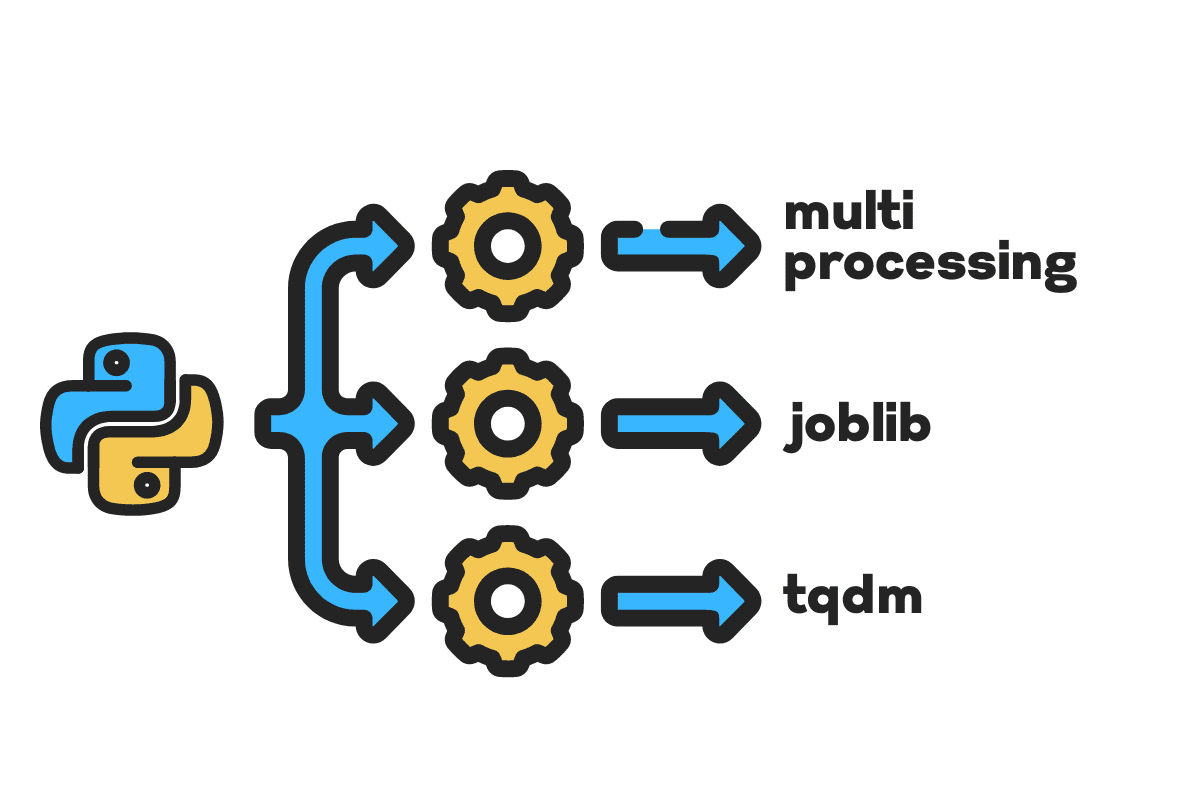

In this blog, we will learn how to reduce processing time on large files using the multiprocessing, joblib, and tqdm Python packages. It is a simple tutorial that can be applied to any file, database, image, video, or audio.

Note: we are using the Kaggle notebook for the experiments. The processing time can vary from machine to machine.

We will be using the US Accidents (2016 – 2021) dataset from Kaggle, which consists of 2.8 million records and 47 columns.

We will import multiprocessing, joblib, and tqdm for parallel processing, pandas for data ingestion, and re, nltk, and string for text processing.

    # Parallel Computing
    import multiprocessing as mp
    from joblib import Parallel, delayed
    from tqdm.notebook import tqdm

    # Data Ingestion
    import pandas as pd

    # Text Processing
    import re
    from nltk.corpus import stopwords
    import string

Before we jump right in, let’s set n_workers by doubling cpu_count(). As you can see, we have 8 workers.

    n_workers = 2 * mp.cpu_count()

    print(f"{n_workers} workers are available")

    >>> 8 workers are available

In the next step, we will ingest the large CSV file using the pandas read_csv function. Then, we will print out the shape of the dataframe, the names of the columns, and the processing time.

Note: Jupyter’s magic function %%time can display CPU times and wall time at the end of the process.
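Outside a notebook, where %%time is not available, a rough equivalent can be put together from the standard library. The sketch below is only an illustration and was not part of the experiments; the helper name `timed` is hypothetical. Note that `time.process_time` only counts CPU time spent in the calling process, so work done by child processes does not show up in it.

```python
import time


def timed(func, *args, **kwargs):
    """Call func and return (result, wall_seconds, cpu_seconds)."""
    wall_start = time.perf_counter()   # wall-clock timer
    cpu_start = time.process_time()    # CPU time of this process only
    result = func(*args, **kwargs)
    wall = time.perf_counter() - wall_start
    cpu = time.process_time() - cpu_start
    return result, wall, cpu


result, wall, cpu = timed(sum, range(1_000_000))
print(f"CPU time: {cpu:.3f} s, Wall time: {wall:.3f} s")
```

This may also help when reading the parallel runs in this tutorial: the reported CPU times are small because most of the work happens in the worker processes.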

    %%time
    file_name = "../input/us-accidents/US_Accidents_Dec21_updated.csv"
    df = pd.read_csv(file_name)

    print(f"Shape:{df.shape}\n\nColumn Names:\n{df.columns}\n")

Output

    Shape:(2845342, 47)

    Column Names:
    Index(['ID', 'Severity', 'Start_Time', 'End_Time', 'Start_Lat', 'Start_Lng',
           'End_Lat', 'End_Lng', 'Distance(mi)', 'Description', 'Number', 'Street',
           'Side', 'City', 'County', 'State', 'Zipcode', 'Country', 'Timezone',
           'Airport_Code', 'Weather_Timestamp', 'Temperature(F)', 'Wind_Chill(F)',
           'Humidity(%)', 'Pressure(in)', 'Visibility(mi)', 'Wind_Direction',
           'Wind_Speed(mph)', 'Precipitation(in)', 'Weather_Condition', 'Amenity',
           'Bump', 'Crossing', 'Give_Way', 'Junction', 'No_Exit', 'Railway',
           'Roundabout', 'Station', 'Stop', 'Traffic_Calming', 'Traffic_Signal',
           'Turning_Loop', 'Sunrise_Sunset', 'Civil_Twilight', 'Nautical_Twilight',
           'Astronomical_Twilight'],
          dtype='object')

    CPU times: user 33.9 s, sys: 3.93 s, total: 37.9 s
    Wall time: 46.9 s

The clean_text is a straightforward function for processing and cleaning the text. We will get the English stopwords using nltk.corpus and use them to filter out stop words from the text line. After that, we will remove special characters and extra spaces from the sentence. It will be the baseline function to determine processing time for serial, parallel, and batch processing.

    def clean_text(text):
        # Remove stop words
        stops = stopwords.words("english")
        text = " ".join([word for word in text.split() if word not in stops])
        # Remove special characters
        text = text.translate(str.maketrans('', '', string.punctuation))
        # Remove extra spaces
        text = re.sub(' +', ' ', text)
        return text
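One detail worth noting about clean_text: it rebuilds the stop-word list on every call, and checking membership in a list is linear in its length. A minimal sketch of a variant that builds the lookup structures once is shown below. It was not used for the timings in this tutorial, the name `clean_text_fast` is hypothetical, and the short hard-coded `STOPS` set is only a stand-in for `set(stopwords.words("english"))` so that the snippet runs without NLTK.

```python
import re
import string

# Hypothetical, shortened stand-in for set(stopwords.words("english")).
STOPS = frozenset({"a", "an", "the", "on", "at", "is", "to", "of", "and", "due"})

# Build the punctuation translation table once instead of on every call.
PUNCT_TABLE = str.maketrans("", "", string.punctuation)


def clean_text_fast(text):
    # Remove stop words (case-sensitive, set lookup)
    text = " ".join(word for word in text.split() if word not in STOPS)
    # Remove special characters
    text = text.translate(PUNCT_TABLE)
    # Remove extra spaces
    return re.sub(" +", " ", text)


print(clean_text_fast("Accident on the I-270 at Exit 52, due to an overturned truck."))
```

Because the function stays a plain top-level function, it can be passed to any of the parallel approaches in the same way as clean_text.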

For serial processing, we can use the pandas .apply() function, but if you want to see the progress bar, you need to activate tqdm for pandas and then use the .progress_apply() function.

We are going to process the 2.8 million records and save the result back to the “Description” column.

    %%time
    tqdm.pandas()

    df['Description'] = df['Description'].progress_apply(clean_text)

Output

It took 9 minutes and 5 seconds for the high-end processor to serially process 2.8 million rows.

    100% 2845342/2845342 [09:05<00:00, 5724.25it/s]
    CPU times: user 8min 14s, sys: 53.6 s, total: 9min 7s
    Wall time: 9min 5s

There are various ways to process the file in parallel, and we are going to learn about all of them. multiprocessing is a built-in Python package that is commonly used for parallel processing of large files.

We will create a multiprocessing Pool with 8 workers and use the map function to initiate the process. To display progress bars, we are using tqdm.

The map function consists of two sections. The first requires the function, and the second requires an argument or list of arguments.

Learn more by reading the documentation.

    %%time
    p = mp.Pool(n_workers)

    df['Description'] = p.map(clean_text, tqdm(df['Description']))

Output

We have improved our processing time by about 2.4X. The processing time dropped from 9 minutes 5 seconds to 3 minutes 51 seconds.

    100% 2845342/2845342 [02:58<00:00, 135646.12it/s]
    CPU times: user 5.68 s, sys: 1.56 s, total: 7.23 s
    Wall time: 3min 51s
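In the Pool example, tqdm wraps the input, so the bar appears to track how quickly rows are handed to the pool rather than how many results have come back. That may be why the bar finishes at 02:58 while the wall time is 3 minutes 51 seconds. A minimal sketch of an alternative is to iterate over `imap`, which yields results in input order as they complete, and to close the pool with a context manager. The helper name `ordered_map` is hypothetical, and `str.upper` is used as a picklable stand-in for clean_text.

```python
import multiprocessing as mp


def ordered_map(pool, func, items, chunksize=1):
    """Apply func to items through pool.imap and collect results in input order."""
    results = []
    # imap yields results lazily, so this loop advances as work completes.
    # Wrapping the iterator in tqdm(..., total=len(items)) here would give a
    # progress bar tied to finished results (assumption: tqdm is installed).
    for result in pool.imap(func, items, chunksize=chunksize):
        results.append(result)
    return results


if __name__ == "__main__":
    texts = ["lane blocked", "road closed", "minor delay", "accident cleared"]
    # The with-block shuts the worker processes down when it exits.
    with mp.Pool(processes=2) as pool:
        print(ordered_map(pool, str.upper, texts, chunksize=2))
```

A larger `chunksize` reduces the overhead of sending rows to the workers one at a time, which matters when each call is as cheap as a text clean-up.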

We will now learn about another Python package to perform parallel processing. In this section, we will use joblib’s Parallel and delayed to replicate the map function.

- The Parallel requires two arguments: n_jobs = 8 and backend = multiprocessing.

- Then, we will add clean_text to the delayed function.

- Create a loop to feed a single value at a time.

The process below is quite generic, and you can modify your function and array according to your needs. I have used it to process thousands of audio and video files without any issue.

Recommended: add exception handling using try: and except:

    def text_parallel_clean(array):
        result = Parallel(n_jobs=n_workers, backend="multiprocessing")(
            delayed(clean_text)(text)
            for text in tqdm(array)
        )
        return result
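The recommendation to add exception handling could look like the sketch below. It wraps a simplified cleaner so that one bad value, for instance a missing entry that arrives as a float NaN or None, does not stop a long parallel run. The names `clean_text_basic` and `safe_clean` are hypothetical, and returning an empty string for bad values is an assumption; you may prefer to log or re-raise instead.

```python
import re
import string


def clean_text_basic(text):
    # Simplified stand-in for clean_text (no stop-word removal)
    text = text.translate(str.maketrans("", "", string.punctuation))
    return re.sub(" +", " ", text)


def safe_clean(text, default=""):
    """Clean text, returning default when the value is not a string."""
    try:
        return clean_text_basic(text)
    except (AttributeError, TypeError):
        # Non-string values (NaN, None, numbers) have no .translate method
        return default


print([safe_clean(value) for value in ["Lane  blocked!", float("nan"), None]])
```

Since `safe_clean` is an ordinary top-level function, it can be passed to delayed in place of clean_text.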

Add the “Description” column to text_parallel_clean().

    %%time
    df['Description'] = text_parallel_clean(df['Description'])

Output

Our function took 13 seconds longer than the multiprocessing Pool. Even so, Parallel is about 5 minutes faster than serial processing.

    100% 2845342/2845342 [04:03<00:00, 10514.98it/s]
    CPU times: user 44.2 s, sys: 2.92 s, total: 47.1 s
    Wall time: 4min 4s

There is a better way to process large files: split them into batches and process the batches in parallel. Let’s start by creating a batch function that will run clean_text on a single batch of values.

Batch Processing Function

    def proc_batch(batch):
        return [
            clean_text(text)
            for text in batch
        ]

Splitting the File into Batches

The function below will split the file into multiple batches based on the number of workers. In our case, we get 8 batches.

    def batch_file(array, n_workers):
        file_len = len(array)
        batch_size = round(file_len / n_workers)
        batches = [
            array[ix:ix+batch_size]
            for ix in tqdm(range(0, file_len, batch_size))
        ]
        return batches

    batches = batch_file(df['Description'], n_workers)

    >>> 100% 8/8 [00:00<00:00, 280.01it/s]
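The batching function works for this dataset, but `round` can round the batch size down, which yields one batch more than the number of workers, or a batch size of zero for very short inputs. A minimal sketch of a variant that rounds up instead is shown below; the name `batch_list` is hypothetical and it operates on a plain list rather than a pandas column.

```python
import math


def batch_list(items, n_batches):
    """Split items into at most n_batches contiguous slices."""
    if n_batches < 1:
        raise ValueError("n_batches must be at least 1")
    # Round up so no extra short batch is created, and never go below 1
    batch_size = max(1, math.ceil(len(items) / n_batches))
    return [items[ix:ix + batch_size] for ix in range(0, len(items), batch_size)]


batches = batch_list(list(range(10)), 4)
print(len(batches), batches)
```

With 10 items and 4 workers, `round(10 / 4)` gives 2 in Python 3 and would produce 5 batches, while the rounded-up size of 3 produces 4.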

Running Parallel Batch Processing

Finally, we will use Parallel and delayed to process batches.

Note: To get a single array of values, we have to run list comprehension as shown below.

    %%time
    batch_output = Parallel(n_jobs=n_workers, backend="multiprocessing")(
        delayed(proc_batch)(batch)
        for batch in tqdm(batches)
    )

    df['Description'] = [j for i in batch_output for j in i]

Output

We have improved the processing time: batching brought the wall time down to 3 minutes 56 seconds, 8 seconds faster than the unbatched Parallel run. This technique is famous for processing complex data and training deep learning models.

    100% 8/8 [00:00<00:00, 2.19it/s]
    CPU times: user 3.39 s, sys: 1.42 s, total: 4.81 s
    Wall time: 3min 56s
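The flattening step that turns the list of batch results back into a single list can also be written with `itertools.chain.from_iterable`, which some readers find easier to read than a nested comprehension. The sketch below uses a small hypothetical `batch_output` in place of the real results.

```python
from itertools import chain

# Hypothetical batch results: one inner list per processed batch
batch_output = [["lane blocked", "road closed"], ["minor delay"], ["accident cleared"]]

# Concatenate the inner lists in order, keeping the original row order
flat = list(chain.from_iterable(batch_output))
print(flat)
```

Both forms keep the rows in their original order, as long as the batches are contiguous slices and are returned in the order they were submitted.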

tqdm takes multiprocessing to the next level. It is simple and powerful. I recommend it to every data scientist.

Check out the documentation to learn more about multiprocessing.

The process_map requires:

- Function name

- Dataframe column

- max_workers

- chunksize is similar to batch size. We will calculate the batch size using the number of workers, or you can set the number based on your preference.

    %%time
    from tqdm.contrib.concurrent import process_map
    batch = round(len(df) / n_workers)

    df["Description"] = process_map(
        clean_text, df["Description"], max_workers=n_workers, chunksize=batch
    )

Output

With a single function call, we match the best wall time so far: 3 minutes 51 seconds, the same as the multiprocessing Pool.

    100% 2845342/2845342 [03:48<00:00, 1426320.93it/s]
    CPU times: user 7.32 s, sys: 1.97 s, total: 9.29 s
    Wall time: 3min 51s
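As far as I know, process_map is a thin wrapper that adds a progress bar on top of `concurrent.futures.ProcessPoolExecutor.map`. If tqdm is not available, the same pattern can be sketched with the standard library alone. The helper names `chunk_size_for` and `executor_map` are hypothetical, and `str.upper` is again a picklable stand-in for clean_text.

```python
import concurrent.futures as cf
import math


def chunk_size_for(n_items, n_workers):
    """One chunk per worker, rounded up, and never smaller than 1."""
    return max(1, math.ceil(n_items / n_workers))


def executor_map(executor, func, items, chunksize=1):
    """Run func over items on an executor and return results in input order."""
    return list(executor.map(func, items, chunksize=chunksize))


if __name__ == "__main__":
    texts = ["lane blocked", "road closed", "minor delay", "accident cleared"]
    n_workers = 2
    with cf.ProcessPoolExecutor(max_workers=n_workers) as executor:
        size = chunk_size_for(len(texts), n_workers)
        print(executor_map(executor, str.upper, texts, chunksize=size))
```

For the 2,845,342 rows and 8 workers used here, `chunk_size_for` gives 355,668 rows per chunk, which is the same value that `round` produces in the tutorial code.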

You need to find a balance and select the technique that works best for your case. It can be serial, parallel, or batch processing. Parallel processing can backfire if you are working with a smaller, less complex dataset.
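To compare the techniques side by side, the wall times reported in the outputs of this tutorial can be turned into speedups over the serial baseline. The short sketch below only does that arithmetic; the numbers come from a single run on a Kaggle notebook, so treat the ranking as indicative rather than definitive.

```python
# Wall times in seconds, taken from the outputs reported in this tutorial
wall_times = {
    "serial (progress_apply)": 9 * 60 + 5,
    "multiprocessing Pool": 3 * 60 + 51,
    "joblib Parallel": 4 * 60 + 4,
    "joblib Parallel (batches)": 3 * 60 + 56,
    "tqdm process_map": 3 * 60 + 51,
}


def speedup(baseline_seconds, seconds):
    """How many times faster a run is than the baseline."""
    return baseline_seconds / seconds


baseline = wall_times["serial (progress_apply)"]
for name, seconds in wall_times.items():
    print(f"{name:<28}{seconds:>4d} s  {speedup(baseline, seconds):.2f}x")
```

All four parallel variants land between roughly 2.2x and 2.4x, so on this workload the choice between them is mostly a matter of convenience.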

In this mini-tutorial, we have learned about various Python packages and techniques that allow us to parallel process our data functions.

If you are only working with a tabular dataset and want to improve your processing performance, then I suggest you try Dask, datatable, and RAPIDS.


Abid Ali Awan (@1abidaliawan) is a certified data scientist professional who loves building machine learning models. Currently, he is focusing on content creation and writing technical blogs on machine learning and data science technologies. Abid holds a Master's degree in Technology Management and a bachelor's degree in Telecommunication Engineering. His vision is to build an AI product using a graph neural network for students struggling with mental illness.


- Source: https://www.kdnuggets.com/2022/07/parallel-processing-large-file-python.html?utm_source=rss&utm_medium=rss&utm_campaign=parallel-processing-large-file-in-python
