Jay Dawani is the co-founder and CEO at Lemurian Labs, a startup developing an accelerated computing platform tailored specifically for AI applications. The platform breaks through the hardware barriers to make AI development faster, cheaper, more sustainable, and accessible to more than just a few companies.

Prior to founding Lemurian, Jay founded two other companies in the AI space. He is also the author of the top-rated “Mathematics for Deep Learning.”

An expert across artificial intelligence, robotics and mathematics, Jay has served as the CTO of BlocPlay, a public company building a blockchain-based gaming platform, and as Director of AI at GEC, where he led the development of several client projects in areas including retail, algorithmic trading, protein folding, robots for space exploration, recommendation systems, and more. In his spare time, he has also been an advisor at NASA Frontier Development Lab, Spacebit and SiaClassic.

The last time we featured Lemurian Labs you were focused on robotics and edge AI. Now you’re focused on data center and cloud infrastructure. What happened that made you want to pivot?

Indeed, we did transition from building a high-performance, low-latency system-on-chip for autonomous robotics applications, one that could accelerate the entire sense-plan-act loop, to building a domain-specific accelerator for AI focused on datacenter-scale applications. But it wasn’t just an ordinary pivot; it was a clarion call we felt we had the responsibility to answer.

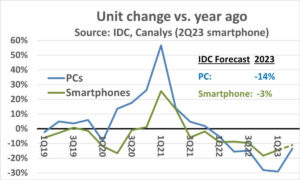

In 2018, we were working on training a 2.1 billion parameter model, but we abandoned the effort because the cost was so extraordinarily high that we couldn’t justify it. So imagine my surprise that GPT-3, which OpenAI released as ChatGPT in November 2022, was a 175 billion parameter model. This model is more than 80X larger than what we were working on merely 4 years earlier, which is both exciting and frightening.

The cost of training such a model is staggering, to say the least. Based on current scaling trends, we can expect the cost of training a frontier AI model to exceed a billion dollars in the not too distant future. While the capabilities of these models will be astounding, the cost is ridiculously high. Based on this trajectory, only a handful of very well resourced companies with their own datacenters will be able to afford to train, deploy and fine-tune these models. This isn’t purely because compute is expensive and power hungry, but also because the software stacks we rely on were not built for this world.

Because of geographical and energy constraints, there are only so many places to build datacenters. To meet the compute demands of AI, we need to be able to build zettascale machines without requiring 20 nuclear reactors to power them. We need a more practical, scalable and economical solution. We looked around and didn’t see anyone on a path to solving this. And so, we went to the drawing board to look at the problem holistically as a system of systems and reason about a solution from first principles. We asked ourselves: how would we design the full stack, from software to hardware, if we had to economically serve 10 billion LLM queries a day? We’ve set our sights on a zettascale machine in under 200MW by 2028.
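To give a feel for the scale of those targets, here is a back-of-the-envelope sketch in Python. It assumes "zettascale" means 10^21 operations per second sustained, which the interview does not spell out (nor does it state the numeric precision or operation type), and it spreads the 10 billion daily queries evenly across the day. The variable names are illustrative only.

```python
# Back-of-the-envelope arithmetic for the targets quoted above.
# Assumption: "zettascale" = 1e21 operations per second (precision unspecified).
ZETTA = 1e21             # operations per second
POWER_BUDGET_W = 200e6   # 200 MW power envelope
QUERIES_PER_DAY = 10e9   # 10 billion LLM queries a day

# Operations per second per watt is the same as operations per joule.
ops_per_joule = ZETTA / POWER_BUDGET_W

# Average query rate if the load were perfectly even over 86,400 seconds.
queries_per_second = QUERIES_PER_DAY / 86_400

print(f"{ops_per_joule:.1e} operations per joule")
print(f"{queries_per_second:,.0f} queries per second")
```

Under these assumptions the target works out to roughly 5 trillion operations per joule, and the query load averages about 116,000 queries per second before accounting for peaks.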

The trick is to look at it from the point of view of incommensurate scaling – different parts of a system follow different scaling rules, so at some point things just stop working, start breaking or the cost benefit tradeoff no longer makes sense. When this happens, the only option is to redesign the system. Our assessment and solution encompasses the workload, number system, programming model, compiler, runtime and hardware holistically.
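A toy model can make incommensurate scaling concrete. The sketch below is purely illustrative and is not drawn from Lemurian's analysis: it assumes compute time per step shrinks in proportion to the node count while coordination cost grows linearly with it, and the function name and constants are made up.

```python
def step_time(nodes, work=1000.0, comm_per_node=0.1):
    """Toy model: compute time shrinks as work/nodes, while coordination
    cost grows linearly with the number of nodes (both assumed)."""
    return work / nodes + comm_per_node * nodes

# Evaluate a few cluster sizes and find the one with the shortest step time.
times = {n: step_time(n) for n in (10, 50, 100, 200, 400)}
best_nodes = min(times, key=times.get)

for n, t in times.items():
    print(f"{n:>4} nodes -> {t:6.1f} time units per step")
print("fastest configuration:", best_nodes, "nodes")
```

In this model the step time falls until about 100 nodes and then rises again, because the two terms scale differently. Past that point, adding hardware makes things worse, which is the kind of break that forces a redesign rather than more of the same.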

Thankfully, our existing investors and the rest of the market see the vision, and we raised a $9M seed round to develop our number format, PAL, to explore the design space and converge on an architecture for our domain-specific accelerator, and to architect our compiler and runtime. In simulations, we’ve been able to achieve a 20X throughput gain in a smaller energy footprint than modern GPUs, and we are projecting an 8X benefit in system performance for total cost of ownership on the same transistor technology.

Needless to say, we’ve got a lot of work ahead of us, but we’re pretty excited about the prospect of being able to redefine datacenter economics to ensure a future where AI is abundantly available to everyone.

That certainly sounds exciting and those numbers sound impressive. But you have mentioned number systems, hardware, compilers and runtimes as all the things you’re focused on – it sounds like a lot for any company to take on at once. It seems like a very risky proposition. Aren’t startups supposed to be more focused?

It does sound like a lot of different efforts, but it is, in fact, one effort with a lot of interconnected parts. Solving only one of these components in isolation from the others will only hinder the potential for innovation, because it means overlooking the systemic inefficiencies and bottlenecks. Jensen Huang said it best: “In order to be an accelerated computing company, you have to be a full stack company”, and I fully agree. They are the current market leader for a reason. But I would challenge the notion that we are not focused. Our focus is on how we think about the problem holistically and how best to solve it for our customers.

Doing that requires a multidisciplinary approach like ours. Each part of our work informs and supports the others, enabling us to create a solution that is far more than the sum of its parts. Imagine if you had to build a racecar. You wouldn’t arbitrarily pick a chassis, add racing tires, drop in the most powerful engine you can find and race it, right? You would think about the aerodynamics of the car’s body to reduce drag and enhance downforce, optimize the weight distribution for good handling, custom design the engine for maximum performance, get a cooling system to prevent overheating, spec a roll cage to keep the driver safe, etc. Each one of these elements builds upon and informs the others.

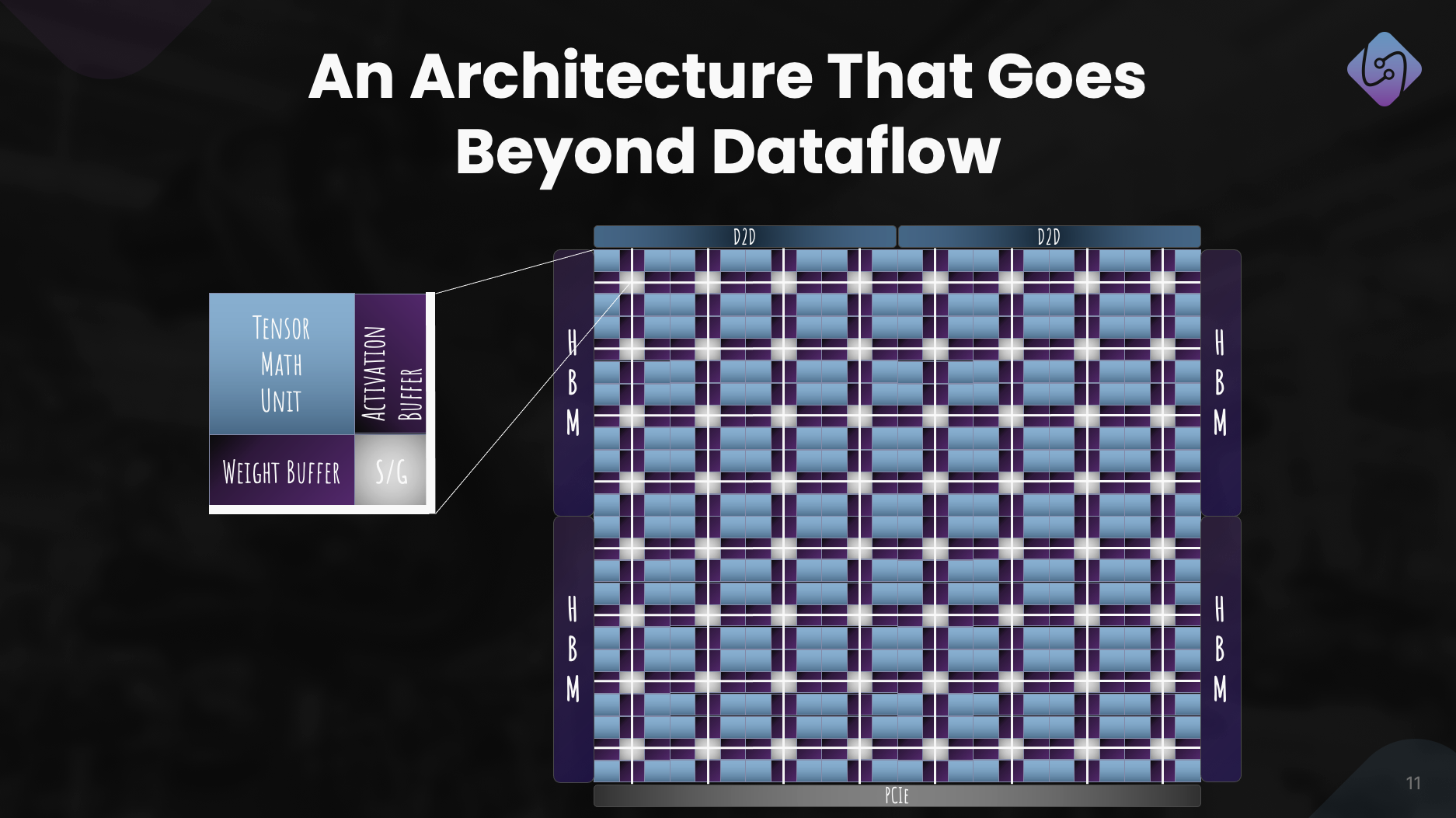

That said, it is risky to try and do all of it at once for any company in any industry. To manage the risks we are taking a phased approach, allowing us to validate our technology with customers and adjust our strategy as needed. We have proven our number format works and that it has better power-performance-area than equivalent floating point types, while also having better numerical properties which make it easier to quantize neural networks to smaller bit-widths. We have designed an architecture which we feel confident in, and it is suitable for both training and inference. But more important than all of that is getting the software right, and that is the bulk of our immediate focus. We need to ensure we make the right decisions in our software stack for where we see the world being a year or two or more from today.

Building a hardware company is tough, expensive and takes a long time. The focus on software first sounds like a very viable business on its own, and potentially more appealing to investors in the current climate. Why are you also doing hardware given so many well-funded companies in the space are closing their doors, struggling to get adoption with customers and larger players are building their own hardware?

You’re absolutely correct that software businesses have generally been able to raise capital much more easily than hardware companies, and that hardware is very tough. Our current focus is very much on software because that’s where we see the bigger problem. Let me be clear, the problem isn’t whether I can get kernels running on a CPU or GPU with high performance; that’s a long-solved problem. The problem today is how we make it easier for developers to get more performance, productively, out of several-thousand-node clusters made up of heterogeneous compute without asking them to overhaul their workflow.

That’s the problem we’re currently focused on solving with a software stack that gives developers superpowers and unlocks the full capability of warehouse scale computers, so we can more economically train and deploy AI models.

Now, regarding investment, yes, VCs are being more selective in the kind of companies they back, but it also means VCs are looking for companies with the potential to offer truly groundbreaking products that have a clear path to commercialization while having significant impact. We’ve learned from the challenges and mistakes of others and have actively designed our business model and roadmap to address the risks. It’s also important to take note that what’s made startups successful has rarely been how easily they can raise VC funding, but has more to do with their resourcefulness, stubbornness and customer focus.

And before you ask, we are still working on hardware, but primarily in simulation right now. We don’t intend to tape out for a while. But we can save that conversation for another time.

That is certainly compelling and your phased approach is very different compared with what we’ve seen other hardware companies do. I understand the problem you’re saying your software stack will address, but how does your software differentiate from the various efforts in the market?

Most of the companies you’re referring to are focusing on making it easier to program GPUs by introducing tile-based or task-mapping programming models to get more performance out of GPUs, or building new programming languages to get high performance kernels scheduled on different platforms with support for in-line assembly. Those are important problems that they’re addressing, but we see the problem we’re solving as almost orthogonal.

Let’s for a moment think about the cadence of hardware and software transitions. Single-core architectures gained performance from clock speed and transistor density, but eventually clock speeds hit a plateau. Parallelism using many cores circumvented this and provided sizable speedups. It took software roughly a decade to catch up, because programming models, compilers and runtimes had to be rethought to help developers extract the value in this paradigm. Then, GPUs started becoming general purpose accelerators, again with a different programming model. Again, it took almost a decade for developers to extract value here.

Again, hardware is hitting a plateau. Moore’s law, energy and thermal constraints, memory bottlenecks, the diversity of workloads and the need for exponentially more compute are pushing us towards building increasingly heterogeneous computer architectures for better performance, efficiency and total cost. This shift in hardware will of course create challenges for software because we don’t have the right compilers and runtimes to support the next evolution of computing. This time though, we shouldn’t have to wait another 10 years for software to extract the value of heterogeneous architectures or large clusters, especially when they are going more than 80% unutilized.

What we’re focusing on is building a heterogeneity-aware programming model with task-based parallelism, addressing portable performance with cross processor optimizations, context-aware compilation and dynamic resource allocation. And for us, it doesn’t matter whether it’s a CPU, GPU, TPU, SPU (Lemurian’s architecture) or a mesh of all of them. I know that sounds like a lot of fancy words, but what it’s really saying is that we’ve made it possible to program any kind of processor with a single approach, and we can port code from one kind of processor over to another with minimal effort without needing to sacrifice performance, and schedule work adaptively and dynamically across nodes.
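As a rough illustration of what heterogeneity-aware, task-based scheduling can look like, here is a minimal Python sketch. It is not Lemurian's software stack: the `Device`, `Task` and `schedule` names, the device list and the cost estimates are all hypothetical, and the greedy earliest-finish-time rule is just one simple placement policy. It also ignores task dependencies and data movement, which a real runtime would have to handle.

```python
from dataclasses import dataclass


@dataclass
class Device:
    name: str
    kind: str                  # e.g. "cpu" or "gpu"
    busy_until: float = 0.0    # simulated time at which the device is free


@dataclass
class Task:
    name: str
    cost: dict                 # estimated seconds per device kind (made up)


def schedule(tasks, devices):
    """Greedy earliest-finish-time placement: each task goes to the device
    on which it would finish soonest, given what is already queued there."""
    placement = {}
    for task in tasks:
        candidates = [d for d in devices if d.kind in task.cost]
        if not candidates:
            raise ValueError(f"no device can run {task.name}")
        best = min(candidates, key=lambda d: d.busy_until + task.cost[d.kind])
        best.busy_until += task.cost[best.kind]
        placement[task.name] = best.name
    return placement


devices = [Device("cpu0", "cpu"), Device("gpu0", "gpu")]
tasks = [
    Task("tokenize", {"cpu": 1.0}),
    Task("matmul_a", {"cpu": 8.0, "gpu": 1.0}),
    Task("matmul_b", {"cpu": 8.0, "gpu": 1.0}),
    Task("postprocess", {"cpu": 0.5, "gpu": 2.0}),
]
placement = schedule(tasks, devices)
makespan = max(d.busy_until for d in devices)
print(placement, makespan)
```

The same task description runs unchanged whichever devices are listed; only the cost table and the device list differ. That separation between what the work is and where it runs is the property the answer above describes, shown here in its simplest form.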

If what you say is true you may just completely redefine computing. Let’s talk about funding. You raised $9 million in seed funding last year which signifies strong investor support and belief in your vision. What have you done since?

Over the past year, fueled by the seed funding, we have made significant strides. With our team now at 20 members, we meticulously addressed challenges, engaged with customers and refined our approach.

We focused on enhancing PAL for training and inference, explored computer architecture for our accelerator and developed a simulator for performance metrics. Simultaneously, we reimagined our software stack for datacenter applications, emphasizing heterogeneous computing.

This effort resulted in a well-defined architecture, showcasing PAL’s efficacy for AI at scale. Beyond tech advancements, we pursued collaborations and outreach to democratize access. These efforts position Lemurian Labs to address immediate customer challenges, gearing up for the release of our production silicon.

What are Lemurian Labs’ medium-term plans regarding software stack development, collaborations, and the improvement of the accelerator’s architecture?

Our immediate goal is to create a software stack that targets CPUs, GPUs and our AI accelerators with portable performance, which will be made available to early partners at the end of the year. We’re currently in conversations with most of the leading semiconductor companies, cloud service providers, hyperscalers and AI companies to give them access to our compiler and runtime. In parallel, we continue to work on and improve our accelerator’s architecture for a truly co-designed system of hardware and software. And of course, we have just started raising our series A with very strong interest from the investor community, which will enable us to grow our team and meet our target for software product delivery at the end of the year.

In closing, how do you see Lemurian Labs contributing to changing the landscape of AI development, accessibility and equity in the coming years?

We didn’t set out to redefine computing only for commercial gain or for the fun of it. As Lemurians, our driving force is that we believe in the transformative potential of AI and that more than just a few companies should have the resources to define the future of this technology and how we use it. We also don’t find it acceptable that the datacenter infrastructure for AI is on track to consume as much as 20% of the world’s energy by 2030. We all came together because we believe there is a better path forward for society if we can make AI more accessible by dramatically lowering its associated cost, accelerate the pace of innovation in AI and broaden its impact. By addressing the challenges of current hardware infrastructure, we seek to pave the path to empowering a billion people with the capabilities of AI, ensuring equitable distribution of this advanced technology. We hope our commitment to product-focused solutions, collaboration and continuous innovation positions us as a driving force in shaping the future of AI development to be a positive one.

- Source: https://semiwiki.com/ceo-interviews/341485-ceo-interview-jay-dawani-of-lemurian-labs-2/
